Real CCR1072 experience?

Has anyone at least touched the subject already (except the guys from http://www.stubarea51.net/)?

There is a bit more info in our latest podcast

https://youtu.be/QGjqjltwePU

Is there something specific you want to know about?


Re: Real CCR1072 experience?

Thanks.

Nothing special...

I need only 3 BGP sessions to route 5-6 Gbit/s of traffic, so someone's real experience of using this really new device would be helpful.


Re: Real CCR1072 experience?

I got one humming next to me right now.

first i used it as a traffic generator to test a 100G connection using /tool traffic-generator. i managed to get it to pump 10G of traffic through every interface in each direction (using uninitialised packet payload). i was using port pairs wired in a loop, so sfp1<->sfp2, sfp3<->sfp4 and so on, and every port was configured to send 10Gbps out. it ran like that for a whole day.

although my goal was to test another system with it, i was pretty much impressed with the bang-for-the-buck ratio and the reliability. all the power this pizza box can deliver can silence 10x more expensive gear from other vendors.

right now i have 2-3 plans in my mind where we can use a box like this in our SP network [which is not built from Mikrotik devices].

some generic thoughts:

- it boots like any other device, so it may take up to 55-60 sec to get it running after power-up.

- the software upgrade takes no longer than 60-70 seconds, which is very good compared to some cisco IOS XR boxes, which need tens of minutes to come back and a very complicated upgrade procedure with multiple reloads.

- the fans make some noise even with 0 load, so this is not a "desktop" router for the office [anyway who needs that much power at the deskside]

- surprisingly deep (ca. 10cms deeper than the other CCRs) and heavy

- internals look very clean (i still have no idea what to use the two SSDs for), i especially like the copper mikrotik logo on the PCB. something similar was hidden in the CRS125 under the heatsink.

- there is an internal RS232 header on the board (/port print shows it)

- the power supplies are nice, easy to insert/remove, and i really like the cable clamps which keep the power cables from accidental fall/pull-out

- at last a full sized USB host port!

there are some negatives too:

- the touchscreen sometimes gets laggy. but that's something that you see on all devices.

- the informative stats display an incorrect voltage (0V) even with both PS connected.

- the power supplies are only connected with their output power feeds (12V), which is a very simple design, but no extra information can be extracted from them (like serial numbers or input status), so one cannot distinguish whether the PS broke or the feed is disconnected

- i know, mactelnet is always there, but i'd like to have a USB console. say, connect a cheap USB-B to serial adapter to the internal serial header, route it out to a type-B socket on the front, and you don't need those bulky serial adapters for your notebook.

if you really need forwarding power, this is an instant buy.


-

-

StubArea51

Trainer

- Posts: 1739

- Joined:

- Location: stubarea51.net

- Contact:

Re: Real CCR1072 experience?

Just an update from http://www.stubarea51.net:

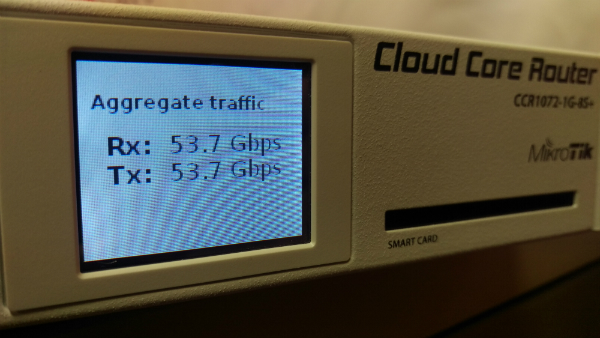

We are waiting on some more server capacity (which is on order) to be able to finish our performance testing. Unfortunately, we hit a CPU threshold on each of our ESXi servers at 27 Gbps per host, so we have only been able to push around 54 Gbps total through the CCR1072. This is definitely not an issue with 1072 capacity but just our ability to spin up enough performance on the VMs to push the full 80 gig. More servers are on the way into our lab and we will publish the full review as soon as we possibly can.

Here is a pic of the 1072 with the ESXi servers at full tilt


Re: Real CCR1072 experience?

Does it still suffer from the 1 Gig per TCP flow limitation that the rest of the CCR lineup has?

Re: Real CCR1072 experience?

That's a generic limitation, if it's lifted in the Gx, you will not only see it here.

Re: Real CCR1072 experience?

What do you mean by 1Gbit limit? There is no limit, not for this device, not for other CCRs.

Last time this assumption/rumor surfaced, it was due to the Bandwidth-test tool's single core limitation, not a CCR limitation.

Re: Real CCR1072 experience?

The 1 Gbps limit was described as a per CPU forwarding limitation. To get 10 Gbps of throughput, you couldn't just send a single 10 Gbps TCP flow between two ports - you needed to aggregate 10x 1 Gbps TCP flows so that multiple CPUs could get involved in the forwarding to provide the aggregate 10 Gbps performance. This was supposedly due to a single flow only being forwarded by a single CPU at any time and so it limited to the maximum forwarding performance of that CPU. As these are pretty weak individually in the CCR, the performance was limited to around 1 Gbps.

I don't recall seeing this being a traffic generator issue.


Re: Real CCR1072 experience?

Described by whom, please? I am writing as an official representative of MikroTik now: there is no such limitation, and there never was.

Re: Real CCR1072 experience?

I think if the bandwidth test tool could be improved a lot across devices it would be useful, as it seems to mislead quite a few people.

-

-

StubArea51

Trainer

- Posts: 1739

- Joined:

- Location: stubarea51.net

- Contact:

Re: Real CCR1072 experience?

I can confirm from our iperf testing over at http://www.stubarea51.net, that we have been able to get 10 Gbps in a single TCP stream using Jumbo MTU. Will post an example when I have a chance

Re: Real CCR1072 experience?

http://forum.mikrotik.com/viewtopic.php?f=1&t=85698

http://forum.mikrotik.com/viewtopic.php ... 57#p461377

http://forum.mikrotik.com/viewtopic.php ... 02#p494480

http://forum.mikrotik.com/viewtopic.php ... 13#p460206

And a post about not getting more than 1 Gbps using the bandwidth test on the CCR:

http://forum.mikrotik.com/viewtopic.php ... 58#p449527

Re: Real CCR1072 experience?

I'm sorry, but most of these posts show a lack of TCP/IP understanding.

To calculate the maximum theoretical speed of a single TCP connection you need to know:

1) RTT - round trip time (how long it takes for a packet to reach the destination and come back)

2) TCP window size - the receive TCP buffer size on both endpoints (not the router)

3) MSS - maximum segment size - in simple terms, how big the packet is.

By default MSS is 1460 (with MTU 1500), TCP window size is 65535 bytes, and you can measure latency yourself with ping.

You can use this calculator to try your values:

https://www.switch.ch/network/tools/tcp ... alculation

So in short, the TCP protocol itself has 3 bottlenecks, and you need to manually improve/increase one of those values to get higher speed.

As previously posted by IPANetEngineer - set MTU to 9000 (MSS is 8960) and you can get 10G without problems.
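The window/RTT relationship behind such a calculator can be sketched in a few lines of Python. This is only a rough model, assuming a loss-free path where the sender can have at most one receive window in flight per round trip; the function names are made up for illustration, and the 40-byte header figure assumes IPv4 and TCP without options.

```python
# Rough upper bound for a single TCP connection on a loss-free path:
# at most one receive window can be in flight per round trip.

def max_tcp_throughput_bps(window_bytes: int, rtt_seconds: float) -> float:
    """Return the window-limited throughput in bits per second."""
    return window_bytes * 8 / rtt_seconds


def mss_for_mtu(mtu: int, ip_header: int = 20, tcp_header: int = 20) -> int:
    """MSS for a given MTU, assuming IPv4 and TCP headers without options."""
    return mtu - ip_header - tcp_header


# Default 65535-byte window at a few example round trip times.
for rtt_ms in (0.1, 0.5, 2.0, 20.0):
    mbps = max_tcp_throughput_bps(65535, rtt_ms / 1000) / 1e6
    print(f"RTT {rtt_ms:5.1f} ms -> at most {mbps:8.1f} Mbit/s")

# MSS for the two MTU values mentioned in the thread.
print("MSS at MTU 1500:", mss_for_mtu(1500))
print("MSS at MTU 9000:", mss_for_mtu(9000))
```

In this model the MSS does not appear in the window limit at all; it mainly decides how many packets per second the endpoints and the router have to handle for a given rate.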


Re: Real CCR1072 experience?

If you put a 2 ms RTT (not unreasonable with a test port on each side of the DUT) into the calculator it gives a max throughput of ~ 58 Gbps at 1500/1460 bytes. Suggests that you don't need to tweak this at least. Might need to up the window size of the tester though (assuming it actually runs a TCP stack).

Re: Real CCR1072 experience?

OK, then on both of your TCP endpoints you need to change the TCP window size to 2.5 MByte:

required tcp buffer to reach 10000 Mbps with RTT of 2.0 ms >= 2441.4 KByte

because with the default TCP window size you will get at most:

maximum throughput with a TCP window of 64 KByte and RTT of 2.0 ms <= 262.14 Mbit/sec.
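Both calculator figures follow from the bandwidth-delay product. Here is a small Python check, assuming that "KByte" means 1024 bytes and that a 64 KByte window means 65536 bytes (that is my reading of the calculator output, not something stated in the thread); the function name is hypothetical.

```python
def required_window_bytes(target_bps: float, rtt_seconds: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to fill the path."""
    return target_bps * rtt_seconds / 8


RTT = 0.002             # 2.0 ms round trip time, in seconds
TARGET_BPS = 10_000e6   # 10000 Mbps

# Buffer needed on both endpoints to keep a 10G path full at this RTT.
required = required_window_bytes(TARGET_BPS, RTT)
print(f"required buffer: {required / 1024:.1f} KByte")

# The inverse: what a 64 KByte window allows at the same RTT.
default_window = 64 * 1024
print(f"64 KByte window: {default_window * 8 / RTT / 1e6:.2f} Mbit/s")
```

Under those assumptions the two printed values line up with the 2441.4 KByte and 262.14 Mbit/sec figures quoted from the calculator.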

-

StubArea51

Trainer

- Posts: 1739

- Joined:

- Location: stubarea51.net

- Contact:

Re: Real CCR1072 experience?

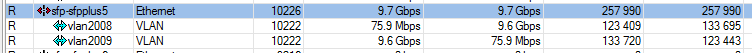

Technically this is true, but the reason for enabling Jumbo Frames/MTU is to reduce the CPU overhead on the host and the router (if it is CPU based and not ASIC based).

It will take an enormous amount of CPU power to get to 58 Gbps with 1500 byte packets. We are topping out at 27 Gbps for TCP throughput on two XEON x5570 quad core processors with an MTU of 9000.

-

-

Jeroen1000

Member Candidate

- Posts: 202

- Joined:

Re: Real CCR1072 experience?

I want to add that this is the reason you have to verify how many packets per second a device can forward at a given packet size. CPU based systems do not scale linearly, as opposed to ASICs.

Say you have a 10 gigabit line at an ISP and the MTU is 1500: your device must be capable of forwarding the packets fast enough.
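To put rough numbers on that, here is a Python sketch of the packets-per-second rate a 10 Gbit/s Ethernet link carries at different frame sizes. It assumes untagged Ethernet (14-byte header, 4-byte FCS) plus 20 bytes of preamble and inter-frame gap per frame; the function name is hypothetical.

```python
ETH_HEADER_AND_FCS = 14 + 4   # untagged Ethernet header + frame check sequence
PREAMBLE_AND_GAP = 8 + 12     # preamble/SFD + minimum inter-frame gap


def line_rate_pps(link_bps: float, frame_bytes: int) -> float:
    """Frames per second at line rate; frame_bytes includes header and FCS."""
    return link_bps / ((frame_bytes + PREAMBLE_AND_GAP) * 8)


LINK = 10e9  # 10 Gbit/s

for label, frame in (
    ("64-byte frames", 64),
    ("MTU 1500", 1500 + ETH_HEADER_AND_FCS),
    ("MTU 9000", 9000 + ETH_HEADER_AND_FCS),
):
    print(f"{label:15s} {line_rate_pps(LINK, frame):>12,.0f} pps")
```

The gap between the smallest and largest frames is roughly two orders of magnitude, which is why a throughput figure for a CPU based router says little without the packet size it was measured at.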


-

-

StubArea51

Trainer

- Posts: 1739

- Joined:

- Location: stubarea51.net

- Contact:

Re: Real CCR1072 experience?

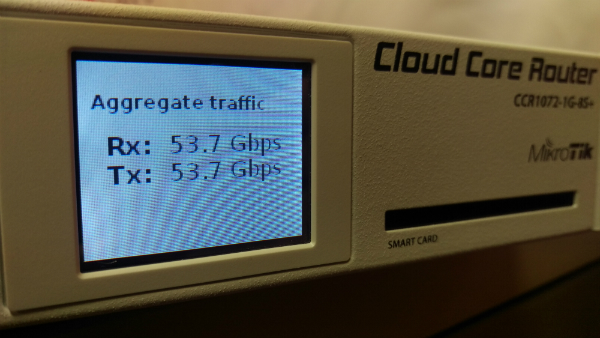

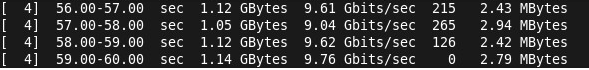

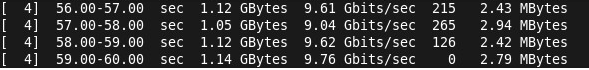

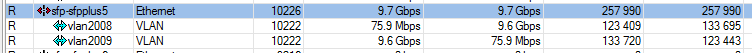

Here is an example of a 10 Gig single TCP stream with 9000 MTU going through the CCR1072 with the following specs:

Server: HP DL360 G6 (2 x Intel X5570 Quad Core)

Hypervisor: ESXi6.0

Guest OS: CentOS 6.6

Traffic Generator: iperf3

iperf single TCP stream

1072 Interface


-

-

FutileNetworks

newbie

- Posts: 36

- Joined:

Re: Real CCR1072 experience?

It would be more useful for us and probably others to know the PPS capabilities of these units, for example what PPS can be achieved via routed ports at 64/128/256/512 byte frame sizes, single 10GbE port to 10GbE port, with 5/10/20 firewall filter rules and also with bonded aggregated links, 20GbE to 20GbE routed and give CPU stats for each test.

Last edited by FutileNetworks on Mon Sep 21, 2015 1:43 am, edited 1 time in total.

Re: Real CCR1072 experience?

How much CPU usage was there on the CCR1072 during that test?

What was the CPU usage distribution across the 72 cores?

What was the configuration of the CCR1072?

Re: Real CCR1072 experience?

I think this 1 gig limit was due to 1gbit of throughput between ports and the cpu on some models, like the 1009 and 1100

Re: Real CCR1072 experience?

Like I said before, there is no such limit. The BTest tool has limits, not the CCR. Other, low end devices do have limits, but the ports of the CCR1072/1036/1016 are all directly connected to the CPU. The CCR1009 has the first 4 ports going through a switch chip, so that is partially true for that model.

Re: Real CCR1072 experience?

These tests have already been published:

http://routerboard.com/CCR1072-1G-8Splus#perf

-

-

StubArea51

Trainer

- Posts: 1739

- Joined:

- Location: stubarea51.net

- Contact:

Re: Real CCR1072 experience?

Here is the CPU distribution with one 10 gig up/down TCP stream running (20 gig aggregate IP forwarding):

Code: Select all

[admin@IPA-LAB-CCR1072] > system resource cpu print

# CPU LOAD IRQ DISK

0 cpu0 0% 0% 0%

1 cpu1 6% 1% 0%

2 cpu2 0% 0% 0%

3 cpu3 0% 0% 0%

4 cpu4 0% 0% 0%

5 cpu5 0% 0% 0%

6 cpu6 0% 0% 0%

7 cpu7 0% 0% 0%

8 cpu8 0% 0% 0%

9 cpu9 0% 0% 0%

10 cpu10 0% 0% 0%

11 cpu11 0% 0% 0%

12 cpu12 32% 2% 0%

13 cpu13 0% 0% 0%

14 cpu14 5% 5% 0%

15 cpu15 0% 0% 0%

16 cpu16 0% 0% 0%

17 cpu17 0% 0% 0%

18 cpu18 0% 0% 0%

19 cpu19 0% 0% 0%

20 cpu20 0% 0% 0%

21 cpu21 0% 0% 0%

22 cpu22 0% 0% 0%

23 cpu23 0% 0% 0%

24 cpu24 0% 0% 0%

25 cpu25 0% 0% 0%

26 cpu26 0% 0% 0%

27 cpu27 0% 0% 0%

28 cpu28 0% 0% 0%

29 cpu29 0% 0% 0%

30 cpu30 0% 0% 0%

31 cpu31 0% 0% 0%

32 cpu32 0% 0% 0%

33 cpu33 0% 0% 0%

34 cpu34 0% 0% 0%

35 cpu35 0% 0% 0%

36 cpu36 0% 0% 0%

37 cpu37 0% 0% 0%

38 cpu38 0% 0% 0%

39 cpu39 21% 4% 0%

40 cpu40 0% 0% 0%

41 cpu41 0% 0% 0%

42 cpu42 0% 0% 0%

43 cpu43 0% 0% 0%

44 cpu44 0% 0% 0%

45 cpu45 0% 0% 0%

46 cpu46 0% 0% 0%

47 cpu47 0% 0% 0%

48 cpu48 0% 0% 0%

49 cpu49 0% 0% 0%

50 cpu50 0% 0% 0%

51 cpu51 0% 0% 0%

52 cpu52 0% 0% 0%

53 cpu53 0% 0% 0%

54 cpu54 0% 0% 0%

55 cpu55 0% 0% 0%

56 cpu56 36% 3% 0%

57 cpu57 0% 0% 0%

58 cpu58 0% 0% 0%

59 cpu59 0% 0% 0%

60 cpu60 0% 0% 0%

61 cpu61 0% 0% 0%

62 cpu62 0% 0% 0%

63 cpu63 0% 0% 0%

64 cpu64 0% 0% 0%

65 cpu65 0% 0% 0%

66 cpu66 0% 0% 0%

67 cpu67 0% 0% 0%

68 cpu68 0% 0% 0%

69 cpu69 0% 0% 0%

70 cpu70 0% 0% 0%

71 cpu71 0% 0% 0%
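A listing like this is easier to digest when summarised. The following is a small Python sketch that parses output in the column layout shown above (#, CPU, LOAD, IRQ, DISK) and reports the busy cores; the function name is made up, and the sample contains only a handful of the rows from the listing.

```python
import re

# One data row: index, CPU name, LOAD%, IRQ%, DISK%.
ROW = re.compile(r"^\s*\d+\s+(cpu\d+)\s+(\d+)%\s+(\d+)%\s+(\d+)%\s*$")


def parse_cpu_load(text: str) -> dict[str, int]:
    """Map each CPU name to its LOAD percentage; non-matching lines are skipped."""
    loads = {}
    for line in text.splitlines():
        match = ROW.match(line)
        if match:
            loads[match.group(1)] = int(match.group(2))
    return loads


# Abbreviated sample: the header plus a few rows copied from the listing.
SAMPLE = """\
# CPU LOAD IRQ DISK
0 cpu0 0% 0% 0%
1 cpu1 6% 1% 0%
12 cpu12 32% 2% 0%
14 cpu14 5% 5% 0%
39 cpu39 21% 4% 0%
56 cpu56 36% 3% 0%
"""

loads = parse_cpu_load(SAMPLE)
busy = {cpu: load for cpu, load in loads.items() if load > 0}
print("busy cores:", busy)
print("sum of LOAD over busy cores:", sum(busy.values()), "%")
```

In the listing above only five of the 72 cores (cpu1, cpu12, cpu14, cpu39 and cpu56) show any load during the test.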

Re: Real CCR1072 experience?

Nice. Now to see the Mx with 100GbE.

Re: Real CCR1072 experience?

Regarding "informative stats display incorrect voltage (0V) even with both PS connected": this is fixed in v6.33rc13.

-

-

StubArea51

Trainer

- Posts: 1739

- Joined:

- Location: stubarea51.net

- Contact:

Re: Real CCR1072 experience?

Here is an update on our performance testing using the CCR1072-1G-8S+ with some throughput results:

http://www.stubarea51.net/2015/09/24/mi ... hroughput/


Re: Real CCR1072 experience?

Ordered mine this week. Replacing our CCR1036 with it; it will run 4 BGP feeds and multiple 10 Gbps interfaces.

-

-

SystemErrorMessage

Member

- Posts: 383

- Joined:

Re: Real CCR1072 experience?

While i wouldn't mind upgrading, i don't really feel like getting a CCR1072, first because i need to earn money, and second because i feel the quality of mikrotik is going down on the software side. They just aren't that competitive anymore in software. Sure, i could just buy the CCR1072 straight away, but the current pace of things for routerOS is really not helping to convince me to get one.

There has been another router that managed 100Gb/s of routing a few years ago, but it was only a project and it didn't get that much spotlight. It was also probably cheaper than the CCR1072 as a solution. It was a GPU based router called pixelshader which used 2x GTX 480 and dual xeon CPUs to support those speeds. Since you can get faster CPUs and GPUs for much cheaper now, and with PCIe 3.0 x16 and IGPs that can also run compute connected to the CPU bus, the only limitation really is the network IO.

Idle power use on the CCRs is horrible compared to x86. Recent x86 CPUs can idle down to 10W, while the CCR1036 idle power is 40W, or 47W since the fan always runs. x86 CPUs also have dynamic clock scaling, which reduces heat and power use. So while the CCRs may be impressive, they not only cost a lot but also use a lot of electricity. Don't Tilera based CPUs also have dynamic clocks and voltages? Although GPUs are power hungry, recent GPUs have very low idle power, especially if you're only using them for compute and not connecting any monitors to them.

I have actually been in favor of using mikrotik for a few years, but it's just lacking the configurability you can get by using a normal x86 linux server. Even Ubiquiti, which lacks features and speed compared to mikrotik, can be used as a linux server, since you can install debian packages on it.


Re: Real CCR1072 experience?

i don't get your point

-

-

SystemErrorMessage

Member

- Posts: 383

- Joined:

Re: Real CCR1072 experience?

What i'm saying is that there are minor issues with routerOS that really irritate me and that will never get fixed.

Other than that, i was saying that this isn't the first inexpensive router to reach 100Gb/s. Packetshader is open source, so its only cost is the hardware, and because it is open source and runs linux, where you can add programs and configure things, it is infinitely more useful than mikrotik's routerOS and routerboards.

I think i misspelt the name of it; it is Packetshader.

http://shader.kaist.edu/packetshader/

It is a few years old, but they reached 100Gb/s long before mikrotik. Of course Cisco reached such speeds before that, using blade-style edge routers that slotted in and used high speed interconnects with many CPUs.

What's quite impressive is that 100Gb/s can now be achieved for less than what mikrotik sells their CCR1072 for. If 10GbE NICs are too expensive, there's HDMI and other similar ports.

I do have a challenge for CCR1072 users, which is to find the fastest speed they can achieve within the CCR1072. This means that you use the test generator within the router without touching a physical interface. On the CCR1036 the highest i achieved was 70Gb/s internally, which pushed the CPU architecture to its limits.


Re: Real CCR1072 experience?

you are comparing a whole appliance's idle power with the idle power of an x86 cpu alone; an x86 platform's idle power is higher than 40 watts

Idle power use on the CCRs are horrible compared to x86. Recent x86 CPUs can idle down to 10W while the CCR1036 idle power is 40W, but 47W since the fan always runs

of course we are not talking about an x86 laptop platform or anemic atom processors

we are talking about a decent, appliance-like x86 platform: at least a core i3 desktop processor with motherboard, ram, nics and power supply

if you want to buy parts and assemble a server to run a router, congratulations

there is no correct or incorrect answer to the comparison between a ccr1072 and a custom built server; it all depends on the specific scenario.

about money: each 10g nic costs a lot. putting 4 decent dual 10g nics on an x86 platform costs about 1500 USD, that's half the cost of a ccr1072 on interfaces alone.

then you have to find a motherboard with 4 pci express x8 slots at real x8 speed (an lga 2011 motherboard), which costs about 300 USD, plus a 400 USD cpu (the cheapest for that socket), 100 USD for ram, 100 USD for a decent 120gb ssd, 50 USD for a 400w 80plus power supply and 50 USD for a generic chassis

total: 2500 USD + the work of selecting, buying and assembling this, and then installing the software and troubleshooting drivers and platform specific details

idle power: 80-100 watts, and that is if everything runs ok between hardware and operating system

space occupied: easily 2 to 4 rack units

Last edited by chechito on Fri Oct 02, 2015 2:39 am, edited 7 times in total.

-

-

SystemErrorMessage

Member

- Posts: 383

- Joined:

Re: Real CCR1072 experience?

That's why there's HDMI. Some switches do use HDMI as a stacking interface. It's cheap and fast.

In fact i have used external PCIe slots connected to a laptop via mini HDMI cables. For thunderbolt you can just use the thunderbolt cable.

Thunderbolt is an option too, since it is a 10Gb/s interface using 4 PCIe lanes.


-

-

StubArea51

Trainer

- Posts: 1739

- Joined:

- Location: stubarea51.net

- Contact:

Re: Real CCR1072 experience?

We finally hit 80 Gbps in the stubarea51.net lab...details, videos and config are here!!!

http://www.stubarea51.net/2015/10/09/mi ... t-testing/


Re: Real CCR1072 experience?

The only problem is that iPerf doesn't really reflect 'real' experience...

I bet that adding a few NAT / firewall rules to the mix will make the performance drop -significantly-

-

-

StubArea51

Trainer

- Posts: 1739

- Joined:

- Location: stubarea51.net

- Contact:

Re: Real CCR1072 experience?

It largely depends on the way in which you deploy it. At a data center that deals with big data, moving around large volumes of tcp traffic for an extended period of time is very normal.

The mechanics of iperf on linux forming a three way TCP handshake are no different than that of a user requesting a web page, except the user's traffic is smaller and more bursty. That too can be scripted and replicated in iperf; ultimately it's a matter of time and energy if you want to replicate traffic patterns that are more consistent with enterprise users or an ISP.

Now that we have a massive amount of bandwidth on demand in our lab and almost unlimited VM capability, we plan to do testing using various services like MPLS, VPLS, NAT, PPPoE, etc and publish it as time permits, so stay tuned


Re: Real CCR1072 experience?

Only problem is that a iPerf, doesn't really reflect 'real' experience...

I bet adding a few nat / firewall rules to the mix, and the performance will drop -significantly-

of course, but for 3000 USD the results are awesome

one thing is clear: the machine and its architecture are capable of filling the interfaces. a good start to the testing

Re: Real CCR1072 experience?

Hopefully we soon see fastpath tx/rx on bonding interfaces.

All our CCR deployments use bonding so not having fastpath support is really limiting us.

StubArea51

Trainer

- Posts: 1739

- Joined:

- Location: stubarea51.net

- Contact:

Re: Real CCR1072 experience?

Agreed... it would be great to have FastPath for LACP. Based on the testing we did, I don't think the 1072 will need FastPath to reach full capacity with bonding interfaces; FastPath would just make it more CPU-efficient. Once we have a 10 gig switch in the lab, I'll probably do some more testing with bonding and throughput.

roc-noc.com

Forum Veteran

- Posts: 874

- Joined:

- Location: Rockford, IL USA

- Contact:

Re: Real CCR1072 experience?

Nice reading, guys. Love the comprehensive tests that Kevin and IP Architechs have done.

Sorry to hijack the thread, but I am wondering what CPU frequency people are using with the CCR1072?

The default as shipped is 1 GHz. Overclocking to 1.2 GHz (1200 MHz) is supported.

Do any of you have experience running this under load, overclocked to 1.2 GHz?

Thanks, Tom

StubArea51

Trainer

- Posts: 1739

- Joined:

- Location: stubarea51.net

- Contact:

Re: Real CCR1072 experience?

Thanks Tom!

We haven't tried it in overclock mode yet, but I'll add that to the list of tests we are doing.

Just recently we got the CCR1072 to 30,000 active PPPoE connections with 30,000 simple queues.

Re: Real CCR1072 experience?

Dears,

I have done a real-life test. The only problem with the CCR1072 and PPPoE with 3 simple queues per subscriber appears when traffic starts to flow.

Once the CPU load goes above 13-15%, bandwidth drops, which makes the CPU load go down again. This cycle repeats until you reboot the router; then it is stable for another 6-10 hours, after which the CPU load becomes unstable again.

I have the router in a real-life situation now, with ~1400 users online and ~670 Mbps at peak!

Best regards,

Layth

Re: Real CCR1072 experience?

Are you on the latest version? What is the load distribution across the CPU cores? Do all your queues run under a single parent queue (or a few)?

Have you contacted support@mikrotik.com?

Re: Real CCR1072 experience?

I am on the latest version, yes.

CPU load is apparently distributed as it should be.

All queues run under a single parent.

I haven't contacted support because I have a history with them without a single success!

Best regards,

Layth

Re: Real CCR1072 experience?

"All queues run under a single parent"

So all your queues are limited to a single CPU core then; that is the most probable bottleneck.

"I haven't contacted support because I have a history without a single success!"

Sad! My experience is the complete opposite: compared to other IT companies, it is one of the best support teams out there. Try submitting something to Cisco.
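On the single-core point: a total CPU figure of 13-15% can hide one saturated core on a 72-core box, which is why the per-core distribution matters. The Python sketch below is only a worked arithmetic example; the per-core numbers are hypothetical and are not measurements from the router in question, and whether the queues really end up on one core is the poster's suggestion, not something verified here.

```python
CORES = 72  # the CCR1072 has 72 CPU cores


def average_load(per_core):
    """Average load in percent, as a single overall CPU figure would show it."""
    return sum(per_core) / len(per_core)


# Hypothetical distribution: one core pinned at 100% by queueing,
# the other 71 cores at a light 12% each.
per_core = [100.0] + [12.0] * (CORES - 1)
avg = average_load(per_core)

print(f"average: {avg:.1f}%  busiest core: {max(per_core):.0f}%")
# prints: average: 13.2%  busiest core: 100%
```

In this made-up case the overall load looks harmless while one core has no headroom left, so throughput would stall long before the total reaches a worrying value.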

Re: Real CCR1072 experience?

I use simple queues with the parent queue set to none; it is not so much queueing as traffic shaping with PCQ.

I wish I had your success story with support, but unfortunately I don't.

Re: Real CCR1072 experience?

We're in the same boat, but with the CCR1036 and only 500 queues. All PCQ with "no parent", and barely able to push 600 Mbps. Is there an official way to run queueing with many hundreds of clients?

Re: Real CCR1072 experience?

Simple queues, not queue trees.

But simple queues, too, have issues if those queues are changed frequently.

Re: Real CCR1072 experience?

We're using simple queues. What do you mean by frequent changes? We might modify a few queues a day on the list, but I can't imagine a few changes making an impact that lasts hours...

Re: Real CCR1072 experience?

There is a problem with ROS 6.35.2 on the CCR1072: the LCD does not work; the screen stays empty / white the whole time.

Re: Real CCR1072 experience?

My advice is DON'T buy a CCR1072. I love MikroTik, but I am now on my second CCR1072 and both have had interface issues. It cannot be used in critical environments like data centers!

Re: Real CCR1072 experience?

CCR1072 has a fundamental watchdog reboot flaw.

Check out this thread and be careful!

viewtopic.php?f=3&t=122525&start=50

This is the first MikroTik product that we hate to use. The downtime and the damage it has done to our uptime and reputation are unbelievable.

We will be switching to a CHR or some other vendor for a core router.
