CHR performance with VMware

Are there any performance tests for CHR running on VMware? I am trying to reach 10 Gbps with simple routing plus NAT load balancing (using the per-connection classifier feature), and I cannot get more than 5 Gbps. I use an 8-core CHR, versions 7.6-7.8, with vmxnet3 network adapters on ESXi 7. I don't see a CPU bottleneck (no CHR core is at 100% usage), but when traffic approaches 5 Gbps I see huge drops down to 100-200 Mbit/s every 2-3 seconds. What is wrong?

Re: CHR performance with VMware

Have you tried PCI passthrough of the NICs to the CHR (if the ESXi host CPU supports it)?

TomjNorthIdaho

Forum Guru

- Posts: 1493

- Location: North Idaho

Re: CHR performance with VMware

I have several VMware ESXi servers (with physical 10-Gig network interfaces) running several MikroTik CHR routers (with vmxnet3 network interfaces). When I run a MikroTik CHR btest between my CHRs from one VMware ESXi server to a different VMware ESXi server across the 10-Gig physical network, I am always able to hit near 10-Gig with my btest(s).

You might want to check some of your configurations:

- On your CHRs, are you running the P unlimited license?

- If you have more than one VMware ESXi server, you might want to consider setting delayed_ack = 1.

- Consider disabling Hyper-Threading. Hyper-Threading makes a CPU appear as if it has twice as many cores, at the expense of each core running a little slower.

- With Hyper-Threading disabled in your BIOS, the total number of vCPUs assigned to all virtual machines added together should be at least one fewer than the number of physical cores.

I use Intel Xeon CPUs running at 3 GHz or faster on my VMware ESXi servers to get my CHRs to run their fastest.

re: ... performance tests for CHR working on VMware ...

On your CHR, perform a btest to 127.0.0.1.

127.0.0.1 is the loopback address, local to the CHR itself, so this test does not touch the virtual NICs or the physical network.

On one of my slowest VMware ESXi servers (a Xeon at 2-something GHz with Hyper-Threading), a CHR doing a btest to 127.0.0.1 gets over 200-Gig.
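A minimal sketch of the loopback test described above, run from the RouterOS terminal. It assumes the bandwidth-test server is currently disabled on the CHR and that `admin` with an empty password is a valid login; both are placeholders for illustration, not details from this thread.

```
# enable the local btest server (assumption: it is not enabled yet)
/tool bandwidth-server set enabled=yes
# UDP test against the loopback address, both directions, for 30 seconds
/tool bandwidth-test address=127.0.0.1 protocol=udp direction=both duration=30s user=admin password=""
```

Because this traffic never leaves the CHR, the result mostly reflects CPU and memory speed, not the vmxnet3 adapters or the physical network.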

EDIT - NOTE: I am planning on upgrading all of my VMware ESXi servers from 10-Gig to 100-Gig physical network interfaces so that I can use faster than 10-Gig routes to/through my CHRs.

North Idaho Tom Jones

Re: CHR performance with VMware

re: have you tried pci passthrough of the NICs to the CHR?

No. How can I configure it?

Re: CHR performance with VMware

re: When I perform a MikroTik CHR btest between my CHRs from one VMware ESXi server to a different VMware ESXi server across the 10-Gig physical network, I am always able to hit near 10-Gig with my btest(s).

I get almost the same results when I run a CHR btest between my ESXi servers. That's why I think the issue may be related to NAT or routing.

re: On your CHRs, are you running the P unlimited license?

I use a P10 license.

re: If you have more than one VMware ESXi server, you might want to consider setting delayed_ack = 1

Where and how can I configure it?

re: Consider disabling hyper-threading.

I tried it and didn't notice any significant change.

re: I use Intel Xeon CPUs running at 3 GHz or faster on my VMware ESXi servers to get my CHRs to run their fastest.

I use Xeon CPUs with a base frequency of 2.1-2.8 GHz and a turbo boost frequency of up to 3.9 GHz.

re: On your CHR, perform a btest to 127.0.0.1

I get 250-300 Gbit/s in such tests.

Re: CHR perfomance with vmware

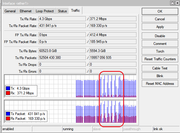

This is how it looks when I remove speed limit:

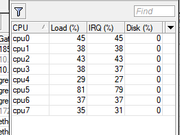

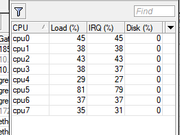

CPU usage during this time:

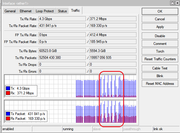

So I have to use queues to limit speed to 4-5 gbps and for getting stable bandwidth without huge drops.

CPU usage during this time:

So I have to use queues to limit speed to 4-5 gbps and for getting stable bandwidth without huge drops.

Last edited by xeonz on Tue Mar 28, 2023 12:55 pm, edited 2 times in total.

Re: CHR performance with VMware

It is just a simple destination NAT config with the per-connection classifier for load balancing. No other features are used; it only load-balances traffic to three destination IPs. Two of these destinations are on the same VMware host; the third is on another VMware host. All VMware hosts use 10 Gbps network cards, and the CHR (and the other virtual machines) use vmxnet3 network adapters. The issue was the same even when there were only two destinations, both located on the same VMware host. The VMware host shows only 4-5 Gbps in use (no VMware network bottleneck).

I have ether1 with multiple IPs (5-10) and these mangle/NAT rules:

Code: Select all

/ip firewall filter add action=reject chain=forward connection-state=new dst-port=25,2525,465,587,139,445 log-prefix=smtp-block protocol=tcp reject-with=icmp-admin-prohibited

/ip firewall mangle add action=mark-connection chain=prerouting connection-state=new dst-address=!XXX.XXX.XXX.168 in-interface=ether1 new-connection-mark=PCC-CA1 passthrough=no per-connection-classifier=src-address:3/0 src-address=!XXX.XXX.XXX.0/24

/ip firewall mangle add action=mark-connection chain=prerouting connection-state=new dst-address=!XXX.XXX.XXX.168 in-interface=ether1 new-connection-mark=PCC-CA2 passthrough=no per-connection-classifier=src-address:3/1 src-address=!XXX.XXX.XXX.0/24

/ip firewall mangle add action=mark-connection chain=prerouting connection-state=new dst-address=!XXX.XXX.XXX.168 in-interface=ether1 new-connection-mark=PCC-CA3 passthrough=no per-connection-classifier=src-address:3/2 src-address=!XXX.XXX.XXX.0/24

/ip firewall nat add action=dst-nat chain=dstnat comment="PCC dst-nat -> nl5-v50-ca1" connection-mark=PCC-CA1 dst-address=!XXX.XXX.XXX.168 in-interface=ether1 to-addresses=XXX.XXX.XXX.148

/ip firewall nat add action=dst-nat chain=dstnat comment="PCC dst-nat -> nl5-v50-ca2" connection-mark=PCC-CA2 dst-address=!XXX.XXX.XXX.168 in-interface=ether1 to-addresses=XXX.XXX.XXX.145

/ip firewall nat add action=dst-nat chain=dstnat comment="PCC dst-nat -> nl14-v50-ca3" connection-mark=PCC-CA3 dst-address=!XXX.XXX.XXX.168 in-interface=ether1 to-addresses=YYY.YYY.YYY.18

/ip firewall nat add action=dst-nat chain=dstnat comment="dst-nat -> nl5-v50-ca1" dst-address=!XXX.XXX.XXX.168 in-interface=ether1 to-addresses=XXX.XXX.XXX.148

/ip firewall nat add action=masquerade chain=srcnat out-interface=ether1

Re: CHR performance with VMware

To rule out your local VMware environment as the culprit, create a couple of test instances and make sure SR-IOV is in place. Apply for a couple of free 60-day P10 trial licenses and run carefully engineered tests. Before you start, verify that the test equipment is working correctly by hooking the devices up directly to each other. If you still get poor throughput during the tests, it's time to systematically troubleshoot the root cause.

TomjNorthIdaho

Re: CHR performance with VMware

xeonz,

FYI,

One of the primary reasons I have so many CHRs (all with P unlimited licenses) is that I run a KISS network (Keep It Simple, Stupid).

None of my CHR routers are do-everything routers. Each CHR router is dedicated to one simple, unique function.

Example of how I use my CHR routers:

- CHR #1 & #2: BGP

- CHR #2: OSPF and core router

- CHR #3: NAT-444 (a NAT444 router, not normal NAT or normal NAT44)

QTY 8 live IPs give me a /21 CGN-NAT (NAT444), where every residential customer gets 256 ports from a live IP address, which is then delivered to a 100.64.x.x WAN IP address at the residential customer (much, much faster than normal NAT or NAT44).

- CHR #4: Customer bandwidth manager (and HTTP redirection to our web site when a customer account is past due).

- CHR #5: Distribution router (my ISP NAT444 networks to customers).

- CHR #6: DHCP server.
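The /21 figure for CHR #3 can be checked with a little arithmetic. This assumes all 65,536 ports of each live IP are handed out in blocks of 256, which is an assumption made only for illustration; a real deployment may reserve part of the port range.

```latex
\[
\frac{8 \times 65536}{256} = 2048 = 2^{11}
\quad\Longrightarrow\quad
32 - 11 = 21 \;\text{ (one /21 of 100.64.x.x customer addresses)}
\]
```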

I have a dozen different remote zones (fiber in some locations and wireless in others). Each location gets its own unique CGN network (CHRs 3a, 4a, 5a, 6a, then CHRs 3b, 4b, 5b, 6b).

I also have another set of CHRs on other VMware ESXi servers for business customers who use live IP addresses.

CHRs #1 & #2 are on one dedicated VMware ESXi server.

CHRs #3, 4, 5, 6 are on other dedicated VMware ESXi servers.

Non-CHR virtual machines are on other VMware ESXi servers.

During peak Internet usage by thousands of customers (around 9 to 14 Gig), I might hit 20% CHR CPU load on some CHRs and 1% on others.

If something breaks, it's simple to fix because the CHR configurations are simple.

FYI - note: I was originally using normal NAT (aka NAT44); when I went to NAT444, everything got 50 percent faster.

EDIT - Note: I use delayed_ack = 1 on all of my VMware ESXi servers because they are located next to each other with reliable high-speed links. This delayed_ack setting will also improve network throughput between VMware ESXi servers and remote sites. Also, my NOC servers perform all Layer-3+ functions; after traffic leaves the NOC, everything is Layer-2 to the WAN interface on the customer-owned router.

TomjNorthIdaho

Re: CHR performance with VMware

re: - If you have more than one VMware ESXi server, you might want to consider setting delayed_ack = 1

re: Where and how can I configure it?

To enable delayed_ack = 1, SSH into your VMware ESXi server and type this CLI command:

vsish -e set /net/tcpip/instances/defaultTcpipStack/sysctl/_net_inet_tcp_delayed_ack 1

*** This sets your VMware ESXi server to use delayed_ack=1.

For delayed_ack=1 to survive a reboot, edit (vi) the file /etc/rc.local.d/local.sh and insert this line above the exit line:

vsish -e set /net/tcpip/instances/defaultTcpipStack/sysctl/_net_inet_tcp_delayed_ack 1

*** Now when VMware ESXi reboots, it will automatically configure delayed_ack=1.

To disable delayed_ack, type this command:

vsish -e set /net/tcpip/instances/defaultTcpipStack/sysctl/_net_inet_tcp_delayed_ack 0

and remove the line from the file /etc/rc.local.d/local.sh.

Summary: without saving any settings, you can try this at the CLI prompt. On two different VMware ESXi servers that communicate with each other, run this on both:

vsish -e set /net/tcpip/instances/defaultTcpipStack/sysctl/_net_inet_tcp_delayed_ack 1

If you are not satisfied, run this on your VMware ESXi servers:

vsish -e set /net/tcpip/instances/defaultTcpipStack/sysctl/_net_inet_tcp_delayed_ack 0

Re: CHR performance with VMware

re: To rule out your local VMware environment as the culprit, create a couple of test instances ...

When I run a btest between two CHRs I get nearly 10 Gbps, so I think there is no problem at the VMware level. No NAT is in use in those tests, which is why I think the issue may be in NAT. I am going to run tests through NAT, but that requires building a special test environment.

Re: CHR performance with VMware

re: - If you have more than one VMware ESXi server, you might want to consider setting delayed_ack = 1

By the way, 70-90% of our production traffic is UDP. Our target virtual machines run DTLS, which is a UDP-based protocol, and the CHRs are used as NAT load balancers. As far as I know, delayed_ack relates to TCP only.

Re: CHR performance with VMware

Well, I ran some tests within a single VMware host with 3 CHRs. The 1st CHR was the btest client, sending TCP or UDP data. The 2nd was the NAT load balancer with per-connection classifier mangle rules. The 3rd was just a btest server with two IPs as the load-balanced NAT destination addresses.

In TCP mode I had no problems with speed: 8-9 Gbps in both cases, with and without NAT load balancing.

In UDP mode I got unstable and low speeds: sometimes 2-5 Gbps, sometimes 0.1-1 Gbps. As soon as I removed the mangle rules that mark connections, I got a good speed of 8-9 Gbps (after restarting the test).

So something is wrong with the mangle marking rules for UDP traffic.

These are my rules on CHR2 (the load-balancing CHR):

Code: Select all

/ip firewall mangle

add action=mark-connection chain=prerouting connection-mark=no-mark connection-state=new dst-address=XXX.XXX.XXX.58 in-interface=ether1 new-connection-mark=pcc1 passthrough=no per-connection-classifier=src-address-and-port:2/0

add action=mark-connection chain=prerouting connection-mark=no-mark connection-state=new dst-address=XXX.XXX.XXX.58 in-interface=ether1 new-connection-mark=pcc2 passthrough=no per-connection-classifier=src-address-and-port:2/1

/ip firewall nat

add action=dst-nat chain=dstnat comment=pcc1 connection-mark=pcc1 dst-address=XXX.XXX.XXX.58 in-interface=ether1 to-addresses=XXX.XXX.XXX.177

add action=dst-nat chain=dstnat comment=pcc2 connection-mark=pcc2 dst-address=XXX.XXX.XXX.58 in-interface=ether1 to-addresses=XXX.XXX.XXX.178

add action=dst-nat chain=dstnat comment="no pcc" dst-address=XXX.XXX.XXX.58 in-interface=ether1 to-addresses=XXX.XXX.XXX.177

add action=src-nat chain=srcnat out-interface=ether1 to-addresses=XXX.XXX.XXX.165
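One way to check how traffic is being split by the rules above is to look at the rule counters and count tracked connections per mark while a test is running. This is only a sketch; the mark names `pcc1` and `pcc2` are taken from the config above, and nothing else about the setup is assumed.

```
# packet and byte counters for each mangle rule
/ip firewall mangle print stats
# number of tracked connections carrying each connection mark
/ip firewall connection print count-only where connection-mark=pcc1
/ip firewall connection print count-only where connection-mark=pcc2
```

If nearly all connections land on one mark, the classifier input (here source address and port) is probably not varying much between the test streams.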

Re: CHR performance with VMware

Regarding the UDP tests:

- how is the test performed?

- how are the cpus doing?

- is throughput the same both ways?

- does packet size affect throughput?

- with and without pcc/lb?
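The questions above could be approached with commands along these lines, run on the btest client CHR. This is a sketch under stated assumptions: 192.0.2.10 is a placeholder for the address under test, `admin` with an empty password is a placeholder login, and the packet sizes are arbitrary examples. Each run would be repeated with and without the PCC mangle rules.

```
# UDP, large packets, client -> server only
/tool bandwidth-test address=192.0.2.10 protocol=udp direction=transmit local-udp-tx-size=1500 duration=30s user=admin password=""
# same test with small packets, to see whether packet size changes the result
/tool bandwidth-test address=192.0.2.10 protocol=udp direction=transmit local-udp-tx-size=200 duration=30s user=admin password=""
# opposite direction, to compare throughput both ways
/tool bandwidth-test address=192.0.2.10 protocol=udp direction=receive remote-udp-tx-size=1500 duration=30s user=admin password=""
# on the load-balancing CHR, while a test is running: load of each CPU core
/system resource cpu print
```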

Re: CHR performance with VMware

I have an update on this case. There were no config or traffic changes, just another VMware host with MikroTik CHR 7.8 and a "P Unlimited" trial license, and now I get different results. The total traffic the CHR can handle is 6.5 Gbps, and one core out of 8 is 100% utilized. Any ideas why only one core is utilized up to 100% and how this can be fixed?

Re: CHR performance with VMware

ROS will handle all packets it deems to belong to the same connection on the same CPU core, to ensure it doesn't change the order of packets. So in the usual scenario, where the device acts as a (stateful) firewall and runs the connection tracking machinery, any connection (be it TCP or UDP) between a pair of peers will be handled by a single CPU core. If one runs the test with multiple parallel streams (e.g. iperf3 with the parameter -P n), the load will spread over multiple CPU cores.

I guess that if the device doesn't have any firewall rules enabled, and can thus disable the connection tracking machinery, it may use all CPU cores even for handling packets of a single connection, because it then has no notion of connections. That could mean some packet reordering might happen (which, BTW, kills the performance of many TCP stacks and upsets many applications that use UDP).
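To see whether a single core is the limit, and whether connection tracking is active, something like the following could be run on the CHR while traffic is flowing. These are standard RouterOS menus; nothing here is specific to the setup discussed in this thread.

```
# load of each CPU core
/system resource cpu print
# which RouterOS processes consume CPU, shown per core
/tool profile cpu=all
# current connection tracking settings and state
/ip firewall connection tracking print
```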

Re: CHR performance with VMware

Can I disable the connection tracking feature if I use the per-connection classifier with NAT load balancing? I thought I could not.

Also, I have thousands of connections in my real user traffic, and the last screenshot shows real traffic, not a performance test like btest or iperf.

Re: CHR performance with VMware

re: Can I disable connection tracking feature if I use it for per-connection classifier with nat load-balancing? I thought I can not.

If you mean fast-tracking, yes... FastTrack must be disabled for all QoS-relevant connections/packets; otherwise the queue/QoS engine won't "see" them any further.

Re: CHR performance with VMware

I don't need queues/QoS if the CHR can handle 10 Gbps. But I thought connection tracking is used by the "per connection classifier" feature, which I am using for NAT load balancing.