Cannot dial out wifi-call from mobile phone

I am not able to make an outgoing WiFi call from a mobile phone that connects through a layer 2 AP attached to an RB4011 with the following details:

routerboard: yes

model: RB4011iGS+

revision: r2

serial-number: xxxxxxxxxxxxxx

firmware-type: al2

factory-firmware: 6.47.9

current-firmware: 7.1

upgrade-firmware: 7.1

Phone A is connected to the WiFi and dials out. Phone B, which is not on WiFi, rings and can pick up the call, but there is no sound.

Even after Phone B picks up the call, Phone A still hears the dial tone, as if no one had picked up.

Any help?


Re: Cannot dial out wifi-call from mobile phone

When talking about "wifi call", do you have in mind the "WiFi calling" or "VoWiFi" service (same thing, different marketing names) provided by the mobile operator, or some IP phone application where an app on the smartphone registers with some other account not related to the mobile operator?

Re: Cannot dial out wifi-call from mobile phone

My issue concerns only the WiFi calling service provided by the mobile operator.

Any suggestion?


gotsprings


Re: Cannot dial out wifi-call from mobile phone

My cellular service outside the house is "ok".

Inside the house it's like 1 bar.

So I have wifi calling turned on, in my phone. As soon as I get in range of my wifi my phone flips to wifi calling. I work from home most days and make about 40 calls per day. They all show as over wifi...

Getting to the point.

If I look at my phone and pull down from the top (Android) it shows "Verizon WiFi Calling".

I can confirm this connection by looking at my wan port and opening Torch.

I can see a connection to Verizon's Calling Gateway on port 4500.

When I am actually on the phone... I can see the packets between when they talk and when I talk.

So...

Look at Torch and see if you can find a connection on port 500 or 4500 on your BRIDGE coming from your phone. Then track that to the WAN port. Find the IP it connects to...

Then you can start troubleshooting.


Re: Cannot dial out wifi-call from mobile phone

I can see the IP connection on port 4500, but how can I track that to the WAN port?

And what is the next troubleshooting step?

Please help.

Thanks a lot!!!!


Re: Cannot dial out wifi-call from mobile phone

And in particular, the symptoms you describe (outgoing call ringing at the called party, but nothing happening when the called party accepts it) suggest that the answer message (SIP 200 OK) did not make it to your router from the mobile operator's exchange, or that the router failed to forward it to the phone.

So open a command line window to the router, make it as wide as your screen allows, and run

/tool sniffer quick ip-address=ip.of.mobile.exchange port=4500

While there is no call, you should see some keepalive packets every 20 seconds or so. Leave it like that for, say, two minutes, then stop the sniffer (Ctrl-C), do /tool sniffer packet print, copy the output as text and post it here (if you have a public IP on WAN of the router, replace it systematically by my.pub.lic.ip or so before posting).

You should see each packet multiple times - in via wlanX, then in via bridge, then out via ether1 (WAN), and then the response in reverse order, if your RB4011 is more or less in the factory default configuration.

Once we get past this, we can debug the actual issue.

Re: Cannot dial out wifi-call from mobile phone

@sindy

why port 4500?


Re: Cannot dial out wifi-call from mobile phone

Because WiFi calling = VoWiFi establishes an IPsec tunnel from the phone to the operator's exchange, so that no one could wiretap the calls on the packet network. Apparently this turned out simpler than to deal with SIPS (SIP/TLS) and SRTP.
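To make the role of port 4500 a little more concrete: when IPsec runs through NAT, IKE and ESP share UDP port 4500, and RFC 3948 describes how a receiver tells them apart by the first bytes of the UDP payload. The following is only a minimal sketch of that framing rule; the function name `classify_natt_payload` and the returned labels are made up for illustration, and it says nothing about what a particular operator's gateway actually sends.

```python
def classify_natt_payload(payload: bytes) -> str:
    """Classify the UDP payload of a packet on port 4500 (RFC 3948 framing).

    Illustrative helper only; the labels are hypothetical names.
    """
    # A NAT keepalive is a single octet with the value 0xFF.
    if payload == b"\xff":
        return "nat-keepalive"
    # Anything else shorter than 4 octets cannot carry a marker or an SPI.
    if len(payload) < 4:
        return "unknown"
    # IKE messages on port 4500 are prefixed with a 4-octet all-zero
    # "non-ESP marker".
    if payload[:4] == b"\x00\x00\x00\x00":
        return "ike"
    # Otherwise the first 4 octets are a non-zero ESP SPI.
    return "esp"
```

Since the ESP payload itself is encrypted, this kind of check only separates tunnel housekeeping from tunnelled traffic; it cannot reveal which ESP packets carry SIP and which carry RTP.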

Re: Cannot dial out wifi-call from mobile phone

This is the result. I just fine-tuned it to include the traffic via WAN.

/tool/sniffer/packet/print

Columns: TIME, INTERFACE, SRC-ADDRESS, DST-ADDRESS, IP-PROTOCOL, SIZE, CPU

# TIME INTERFACE SRC-ADDRESS DST-ADDRESS IP-PROTOCOL SIZE CPU

0 6.581 ether10 192.168.1.8:4500 dest-pub-ip:4500 udp 60 2

1 6.581 local 192.168.1.8:4500 dest-pub-ip:4500 udp 60 2

2 6.581 ether1 src-pub-ip:4500 dest-pub-ip:4500 udp 43 2

3 22.057 ether10 192.168.1.8:4500 dest-pub-ip:4500 udp 122 2

4 22.057 local 192.168.1.8:4500 dest-pub-ip:4500 udp 122 2

5 22.057 ether1 src-pub-ip:4500 dest-pub-ip:4500 udp 122 2

6 22.062 ether1 dest-pub-ip:4500 src-pub-ip:4500 udp 122 2

7 22.062 local dest-pub-ip:4500 192.168.1.8:4500 udp 122 2

8 22.062 ether10 dest-pub-ip:4500 192.168.1.8:4500 udp 122 2

9 22.098 ether10 192.168.1.8:4500 dest-pub-ip:4500 udp 60 2

10 22.098 local 192.168.1.8:4500 dest-pub-ip:4500 udp 60 2

11 22.098 ether1 src-pub-ip:4500 dest-pub-ip:4500 udp 43 2

12 40.301 ether10 192.168.1.8:4500 dest-pub-ip:4500 udp 122 2

13 40.301 local 192.168.1.8:4500 dest-pub-ip:4500 udp 122 2

14 40.301 ether1 src-pub-ip:4500 dest-pub-ip:4500 udp 122 2

15 40.304 ether1 dest-pub-ip:4500 src-pub-ip:4500 udp 122 2

16 40.304 local dest-pub-ip:4500 192.168.1.8:4500 udp 122 2

17 40.304 ether10 dest-pub-ip:4500 192.168.1.8:4500 udp 122 2

18 41.548 ether10 192.168.1.8:4500 dest-pub-ip:4500 udp 60 2

19 41.548 local 192.168.1.8:4500 dest-pub-ip:4500 udp 60 2

20 41.548 ether1 src-pub-ip:4500 dest-pub-ip:4500 udp 43 2

21 49.43 ether10 192.168.1.8:4500 dest-pub-ip:4500 udp 122 2

22 49.43 local 192.168.1.8:4500 dest-pub-ip:4500 udp 122 2

23 49.43 ether1 src-pub-ip:4500 dest-pub-ip:4500 udp 122 2

24 49.433 ether1 dest-pub-ip:4500 src-pub-ip:4500 udp 122 2

25 49.434 local dest-pub-ip:4500 192.168.1.8:4500 udp 122 2

26 49.434 ether10 dest-pub-ip:4500 192.168.1.8:4500 udp 122 2

Any idea?

Thanks a lot.

Re: Cannot dial out wifi-call from mobile phone

OK. This shows that the phone has a local address of 192.168.1.8, is connected via a WiFi AP connected via ether10, ether10 is a member port of bridge local, and ether1 is a WAN.
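For longer captures, this kind of reading can be cross-checked by tallying the printed rows per interface, direction, and size. The sketch below is only an offline helper, assuming the output of /tool/sniffer/packet/print has been pasted into a list of text lines in the column layout posted above; the names `Packet`, `parse_row`, and `tally` are hypothetical, and `dest-pub-ip:4500` is the placeholder used in the posted capture, not a real address.

```python
from collections import Counter
from typing import Iterable, NamedTuple, Optional


class Packet(NamedTuple):
    time: float
    interface: str
    src: str
    dst: str
    size: int


def parse_row(line: str) -> Optional[Packet]:
    """Parse one data row of the printed capture; return None for other lines."""
    fields = line.split()
    # Data rows start with a numeric index; "# TIME ..." and "Columns: ..." do not.
    if len(fields) < 7 or not fields[0].isdigit():
        return None
    try:
        return Packet(float(fields[1]), fields[2], fields[3], fields[4], int(fields[6]))
    except ValueError:
        return None


def tally(lines: Iterable[str], exchange: str = "dest-pub-ip:4500") -> Counter:
    """Count packets per (interface, direction, size) relative to the exchange."""
    counts: Counter = Counter()
    for line in lines:
        pkt = parse_row(line)
        if pkt is None:
            continue
        if pkt.dst == exchange:
            direction = "to-exchange"
        elif pkt.src == exchange:
            direction = "from-exchange"
        else:
            continue  # unrelated traffic
        counts[(pkt.interface, direction, pkt.size)] += 1
    return counts
```

A side observation on the sizes, offered as an inference rather than a certainty: a 43-byte frame on ether1 would be consistent with a one-byte NAT keepalive (14 bytes Ethernet + 20 bytes IPv4 + 8 bytes UDP + 1 byte payload, assuming untagged IPv4 without options), and the 60 bytes seen for the same packet on ether10 would then simply be the minimum Ethernet frame size.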

So now you can run the sniffer again, not restricting it to a particular interface, try the outgoing call, and try to answer the call on the called phone. Since the traffic is encrypted, you cannot tell the SIP registration updates, call control messages, and RTP media packets from one another, or from the IPsec keepalive traffic, by anything other than size. But all the SIP and RTP packets should be larger than the 122 bytes of the IPsec DPDs you can see in the "idle time" capture above. This means that when printing the captured packets, you'll use /tool/sniffer/packet/print where size>122 in order to get rid of the "background noise" and only see the SIP & RTP.

So the pattern we are looking for is the following:

- as you press "call", there should be a single large message from the phone to the exchange (the INVITE), answered by multiple smaller ones from the exchange (100 Trying, 180 Ringing, 183 Session Progress - cannot say whether all of them will be there, but at least one should)

- many same-size packets (RTP ones) should follow, carrying the alerting tone, unless the exchange asks the phone to generate the tone - both cases are possible

- once you answer the called phone, one packet larger than the RTP ones should be seen from the exchange to the phone (200 OK), "responded" with another "larger-than-RTP" one from the phone (ACK). Following the 200 OK, the RTP should start flowing in both directions.

What we are looking for is whether the 200 OK arrives at ether1 (WAN) and whether the router delivers it all the way to ether10. That's basically all we can find out. If it does, the only other wrongdoing of the router could be that it malforms the contents of the packet while delivering it; to find out, it would be necessary to sniff into a file, open the file using Wireshark, and compare the payload of the two packets (the Ethernet and IP headers will differ due to NAT and different MAC addresses).

If the router did nothing wrong, it must be the exchange, the phone, or the wireless AP.

What can interfere with the message exchange outlined above is the periodic SIP registration, which has its own timing, independent of the call establishment messages. So it is better to do the complete procedure (two separate sniffings) for two calls and compare the results. You can also filter out the RTP by size to have only the SIP packets printed, or you can sniff into files and open them using Wireshark for better filtering and graphing possibilities.

As you specifically mention outgoing calls, do I read it right that incoming calls work normally for this phone and this home network? What about outgoing calls in another wireless network?
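The size-based approach described in this post can also be applied offline to a saved capture. The sketch below is one possible way to do it, not a definitive method: it assumes packets are available as (time, interface, src, dst, size) tuples, treats the most common size above the keepalive threshold as the RTP size, and reports every larger packet as a signalling candidate together with the interfaces it was seen on. The function name `signalling_candidates` and the tuple layout are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Tuple

# (time, interface, src, dst, size); this layout is an assumption of the sketch.
Row = Tuple[float, str, str, str, int]


def signalling_candidates(
    packets: Iterable[Row], keepalive_size: int = 122
) -> Dict[float, List[Tuple[str, str, int]]]:
    """Group packets larger than the dominant (presumably RTP) size by timestamp."""
    # Drop the "background noise": keepalives and DPDs up to keepalive_size.
    payload = [p for p in packets if p[4] > keepalive_size]
    if not payload:
        return {}
    # Guess the RTP size as the most frequent remaining packet size.
    rtp_size = Counter(p[4] for p in payload).most_common(1)[0][0]
    seen: Dict[float, List[Tuple[str, str, int]]] = defaultdict(list)
    for time, interface, src, _dst, size in payload:
        if size > rtp_size:
            # A delivered packet should show up on the WAN port and on the
            # LAN port under the same (or nearly the same) timestamp.
            seen[time].append((interface, src, size))
    return dict(seen)
```

If a candidate coming from the exchange is listed on ether1 but has no counterpart on the bridge and on ether10 at about the same time, that would point at the router; if it shows up on all three, the router has at least forwarded it. Note that the guess about the RTP size only holds while RTP dominates the capture, so a capture taken during an established call is the safer input.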

Re: Cannot dial out wifi-call from mobile phone

Incoming calls work normally for this phone in this home network.

Incoming and outgoing calls work normally for this phone in other networks.

Only outgoing calls fail for this phone in this home network.

I will try the outgoing call again in the next few days and let you know the result.

Thanks a lot for your help!!!!!!

Re: Cannot dial out wifi-call from mobile phone

# TIME INTERFACE SRC-ADDRESS DST-ADDRESS IP-PROTOCOL SIZE CPU

0 19.502 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

1 19.53 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

2 19.53 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

3 19.53 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

4 19.555 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

5 19.555 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

6 19.555 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

7 19.561 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

8 19.561 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

9 19.561 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

10 19.609 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

11 19.609 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

12 19.609 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

13 19.609 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

14 19.609 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

15 19.609 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

16 19.622 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

17 19.622 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

18 19.622 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

19 19.642 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

20 19.642 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

21 19.642 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

22 19.662 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

23 19.662 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

24 19.662 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

25 19.682 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

26 19.682 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

27 19.682 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

28 19.702 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

29 19.702 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

30 19.702 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

31 19.733 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

32 19.733 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

33 19.733 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

34 19.743 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

35 19.743 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

36 19.743 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

37 19.762 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

38 19.762 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

39 19.762 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

40 19.793 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

41 19.793 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

42 19.793 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

43 19.802 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

44 19.802 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

45 19.802 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

46 19.822 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

47 19.822 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

48 19.822 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

49 19.853 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

50 19.853 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

51 19.853 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

52 19.862 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

53 19.862 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

54 19.862 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

55 19.882 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

56 19.882 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

57 19.882 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

58 19.914 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

59 19.914 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

60 19.914 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

61 19.922 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

62 19.922 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

63 19.922 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

64 19.941 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

65 19.941 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

66 19.941 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

67 19.977 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

68 19.977 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

69 19.977 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

70 19.981 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

71 19.981 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

72 19.981 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

73 20.012 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

74 20.012 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

75 20.012 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

76 20.029 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

77 20.029 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

78 20.029 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

79 20.058 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

80 20.058 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

81 20.058 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

82 20.061 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

83 20.061 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

84 20.061 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

85 20.082 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

86 20.082 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

87 20.082 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

88 20.102 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

89 20.102 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

90 20.102 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

91 20.122 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

92 20.122 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

93 20.122 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

94 20.142 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

95 20.142 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

96 20.142 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

97 20.162 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 0

98 20.162 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

99 20.162 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 0

100 20.182 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

101 20.182 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

102 20.182 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

103 20.189 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 302 >

104 20.189 local dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

105 20.189 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

106 20.189 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 1514 >

107 20.189 local dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

108 20.189 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

109 20.202 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

110 20.202 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

111 20.202 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

112 20.222 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

113 20.222 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

114 20.222 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

115 20.242 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

116 20.242 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

117 20.242 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

118 20.282 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

119 20.282 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

120 20.282 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

121 20.282 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

122 20.282 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

123 20.282 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

124 20.302 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

125 20.302 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

126 20.302 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

127 20.322 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

128 20.322 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

129 20.322 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

130 20.343 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

131 20.343 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

132 20.343 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

133 20.361 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

134 20.361 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

135 20.361 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

136 20.382 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

137 20.382 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

138 20.382 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

139 20.403 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

140 20.403 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

141 20.403 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

142 20.422 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

143 20.422 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

144 20.422 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

145 20.442 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

146 20.442 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

147 20.442 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

148 20.469 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

149 20.469 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

150 20.469 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

151 20.482 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

152 20.482 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

153 20.482 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

154 20.524 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 158 >

155 20.524 local dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

156 20.524 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

157 20.561 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 158 >

158 20.561 local dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

159 20.561 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

160 20.722 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 158 >

161 20.722 local dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

162 20.722 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

163 20.882 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 158 >

164 20.882 local dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

165 20.882 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

166 21.048 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 158 >

167 21.048 local dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

168 21.048 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

169 21.181 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

170 21.181 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

171 21.181 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

172 21.212 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

173 21.212 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

174 21.212 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

175 21.221 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 158 >

176 21.221 local dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

177 21.221 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

178 21.272 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

179 21.272 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

180 21.272 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

181 21.282 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 158 >

182 21.282 local dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

183 21.282 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

184 21.341 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

185 21.341 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

186 21.341 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

187 21.362 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

188 21.362 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

189 21.362 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

190 21.392 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

191 21.392 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

192 21.392 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

193 21.402 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

194 21.402 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

195 21.402 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

196 21.421 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

197 21.421 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

198 21.421 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

199 21.451 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

200 21.451 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

201 21.451 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

202 21.462 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

203 21.462 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

204 21.462 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

205 21.481 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

206 21.481 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

207 21.481 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

208 21.511 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

209 21.511 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

210 21.511 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

211 21.525 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

212 21.525 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

213 21.525 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

214 21.551 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 158 >

215 21.551 local dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

216 21.551 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

217 21.612 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 158 >

218 21.612 local dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

219 21.612 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 158 >

220 21.621 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

221 21.621 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

222 21.621 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

223 21.643 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

224 21.643 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

225 21.643 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

226 21.682 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

227 21.682 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

228 21.682 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

229 21.682 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

230 21.682 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

231 21.682 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

232 21.702 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

233 21.702 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

234 21.702 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

235 21.722 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

236 21.722 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

237 21.722 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

238 21.748 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

239 21.748 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

240 21.748 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

241 21.762 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

242 21.762 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

243 21.762 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

244 21.782 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

245 21.782 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

246 21.782 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

247 21.809 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

248 21.809 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

249 21.809 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

250 21.821 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

251 21.821 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

252 21.821 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

253 21.841 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

254 21.841 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

255 21.841 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

256 21.869 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

257 21.869 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

258 21.869 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

259 21.882 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

260 21.882 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

261 21.882 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

262 21.902 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

263 21.902 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

264 21.902 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

265 21.929 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

266 21.929 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

267 21.929 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

268 21.942 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

269 21.942 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

270 21.942 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

271 21.963 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

272 21.963 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

273 21.963 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

274 21.989 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

275 21.989 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

276 21.989 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

277 22.019 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

278 22.019 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

279 22.019 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

280 22.022 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

281 22.022 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

282 22.022 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

283 22.052 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

284 22.052 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

285 22.052 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

286 22.059 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

287 22.059 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

288 22.059 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

289 22.079 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

290 22.079 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

291 22.079 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

292 22.111 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

293 22.111 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

294 22.111 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

295 22.119 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

296 22.119 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

297 22.119 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

298 22.14 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

299 22.14 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

300 22.14 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

301 22.171 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

302 22.171 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

303 22.171 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

304 22.18 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

305 22.18 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

306 22.181 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

307 22.203 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

308 22.203 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

309 22.203 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

310 22.23 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

311 22.23 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

312 22.23 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

313 22.242 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

314 22.242 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

315 22.242 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

316 22.262 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

317 22.262 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

318 22.262 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

319 22.289 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

320 22.289 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

321 22.289 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

322 22.302 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

323 22.302 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

324 22.302 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

325 22.322 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

326 22.322 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

327 22.322 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

328 22.348 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

329 22.348 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

330 22.348 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

331 22.36 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

332 22.36 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

333 22.36 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

334 22.382 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

335 22.382 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

336 22.383 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

337 22.411 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

338 22.411 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

339 22.411 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

340 22.421 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

341 22.421 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

342 22.421 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

343 22.441 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

344 22.441 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

345 22.441 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

346 22.466 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

347 22.466 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

348 22.466 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

349 22.479 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

350 22.479 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

351 22.479 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

352 22.501 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

353 22.501 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

354 22.501 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

355 22.52 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

356 22.52 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

357 22.52 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

358 22.557 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

359 22.557 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

360 22.557 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

361 22.561 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

362 22.561 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

363 22.561 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

364 22.58 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

365 22.58 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

366 22.58 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

367 22.599 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

368 22.599 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

369 22.599 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

370 22.621 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

371 22.621 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

372 22.621 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

373 22.639 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

374 22.639 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

375 22.639 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

376 22.66 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

377 22.66 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

378 22.66 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

379 22.682 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

380 22.682 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

381 22.682 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

382 22.738 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

383 22.738 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

384 22.738 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

385 22.738 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

386 22.738 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

387 22.738 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

388 22.742 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

389 22.742 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

390 22.742 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

391 22.761 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

392 22.761 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

393 22.761 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

394 22.802 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

395 22.802 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

396 22.802 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

397 22.802 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

398 22.802 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

399 22.802 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

400 22.822 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

401 22.822 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

402 22.822 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

403 22.862 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

404 22.862 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

405 22.862 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

406 22.863 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

407 22.863 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

408 22.863 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

409 22.884 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

410 22.884 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

411 22.884 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

412 22.901 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

413 22.901 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

414 22.901 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

415 22.923 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

416 22.923 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

417 22.923 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

418 22.953 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

419 22.953 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

420 22.953 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

421 22.961 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

422 22.961 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

423 22.961 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

424 22.982 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

425 22.982 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

426 22.982 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

427 23.013 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

428 23.013 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

429 23.013 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

430 23.035 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

431 23.035 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

432 23.035 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

433 23.041 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

434 23.041 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

435 23.041 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

436 23.061 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

437 23.061 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

438 23.061 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

439 23.085 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

440 23.085 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

441 23.085 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

442 23.118 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

443 23.118 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

444 23.118 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

445 23.119 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

446 23.119 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

447 23.119 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

448 24.191 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 302 >

449 24.191 local dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

450 24.191 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

451 24.191 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 1514 >

452 24.191 local dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

453 24.191 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

454 28.191 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 302 >

455 28.191 local dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

456 28.191 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

457 28.191 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 1514 >

458 28.191 local dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

459 28.191 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

460 28.826 ether10 192.168.1.8:4500 dst-pub-ip:4500 udp 60 >

461 28.826 local 192.168.1.8:4500 dst-pub-ip:4500 udp 60 >

462 28.826 ether1 src-pub-ip:4500 dst-pub-ip:4500 udp 43 >

463 31.747 ether10 192.168.1.8:4500 dst-pub-ip:4500 udp 990 >

464 31.747 local 192.168.1.8:4500 dst-pub-ip:4500 udp 990 >

465 31.747 ether1 src-pub-ip:4500 dst-pub-ip:4500 udp 990 >

466 31.753 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 654 >

467 31.753 local dst-pub-ip:4500 192.168.1.8:4500 udp 654 >

468 31.753 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 654 >

469 32.197 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 302 >

470 32.197 local dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

471 32.197 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

472 32.197 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 1514 >

473 32.197 local dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

474 32.197 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

This is an outgoing call capture. Any ideas?
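A capture this long is easier to read once it is tallied per interface and direction. Below is a minimal Python sketch of that idea, not a definitive tool. It assumes the columns are packet number, time, interface, source, destination, protocol, size and a trailing marker, and that `192.168.1.8` (the phone) and `src-pub-ip` (the router's public address after NAT) are the local side. The function name `summarize` and the `LOCAL_HOSTS` set are made up for illustration.

```python
# Assumption: these addresses belong to "our" side of the tunnel,
# i.e. the phone on the LAN and the router's public address after NAT.
LOCAL_HOSTS = {"192.168.1.8", "src-pub-ip"}


def summarize(lines, local_hosts=LOCAL_HOSTS):
    """Return {(interface, direction): [packet_count, total_size]}."""
    totals = {}
    for line in lines:
        fields = line.split()
        # Assumed column order: number, time, interface, source,
        # destination, protocol, size, trailing marker.
        if len(fields) < 7 or not fields[0].isdigit() or not fields[6].isdigit():
            continue  # skip blank lines and prose
        interface, source, size = fields[2], fields[3], int(fields[6])
        host = source.rsplit(":", 1)[0]  # drop the ":port" suffix
        direction = "out" if host in local_hosts else "in"
        entry = totals.setdefault((interface, direction), [0, 0])
        entry[0] += 1
        entry[1] += size
    return totals


# A few rows copied from the capture, plus a prose line that must be ignored.
sample = [
    "460 28.826 ether10 192.168.1.8:4500 dst-pub-ip:4500 udp 60 >",
    "462 28.826 ether1 src-pub-ip:4500 dst-pub-ip:4500 udp 43 >",
    "466 31.753 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 654 >",
    "467 31.753 local dst-pub-ip:4500 192.168.1.8:4500 udp 654 >",
    "This is an outgoing call capture. Any ideas?",
]

for key, (count, size) in sorted(summarize(sample).items()):
    print(key, count, size)
```

Fed the whole capture, a tally like this should make it easy to compare how many packets leave the phone with how many arrive from `dst-pub-ip`.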


350 22.479 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

351 22.479 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

352 22.501 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

353 22.501 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

354 22.501 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

355 22.52 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

356 22.52 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

357 22.52 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

358 22.557 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

359 22.557 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

360 22.557 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

361 22.561 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

362 22.561 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

363 22.561 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

364 22.58 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

365 22.58 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

366 22.58 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

367 22.599 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

368 22.599 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

369 22.599 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

370 22.621 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

371 22.621 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

372 22.621 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

373 22.639 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

374 22.639 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

375 22.639 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

376 22.66 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

377 22.66 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

378 22.66 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

379 22.682 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

380 22.682 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

381 22.682 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

382 22.738 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

383 22.738 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

384 22.738 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

385 22.738 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

386 22.738 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

387 22.738 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

388 22.742 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

389 22.742 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

390 22.742 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

391 22.761 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

392 22.761 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

393 22.761 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

394 22.802 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

395 22.802 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

396 22.802 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

397 22.802 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

398 22.802 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

399 22.802 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

400 22.822 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

401 22.822 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

402 22.822 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

403 22.862 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

404 22.862 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

405 22.862 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

406 22.863 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

407 22.863 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

408 22.863 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

409 22.884 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

410 22.884 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

411 22.884 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

412 22.901 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

413 22.901 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

414 22.901 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

415 22.923 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

416 22.923 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

417 22.923 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

418 22.953 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

419 22.953 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

420 22.953 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

421 22.961 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

422 22.961 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

423 22.961 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

424 22.982 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

425 22.982 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

426 22.982 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

427 23.013 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

428 23.013 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

429 23.013 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

430 23.035 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

431 23.035 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

432 23.035 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

433 23.041 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

434 23.041 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

435 23.041 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

436 23.061 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

437 23.061 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

438 23.061 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

439 23.085 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

440 23.085 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

441 23.085 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

442 23.118 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

443 23.118 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

444 23.118 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

445 23.119 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 174 >

446 23.119 local dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

447 23.119 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 174 >

448 24.191 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 302 >

449 24.191 local dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

450 24.191 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

451 24.191 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 1514 >

452 24.191 local dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

453 24.191 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

454 28.191 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 302 >

455 28.191 local dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

456 28.191 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

457 28.191 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 1514 >

458 28.191 local dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

459 28.191 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

460 28.826 ether10 192.168.1.8:4500 dst-pub-ip:4500 udp 60 >

461 28.826 local 192.168.1.8:4500 dst-pub-ip:4500 udp 60 >

462 28.826 ether1 src-pub-ip:4500 dst-pub-ip:4500 udp 43 >

463 31.747 ether10 192.168.1.8:4500 dst-pub-ip:4500 udp 990 >

464 31.747 local 192.168.1.8:4500 dst-pub-ip:4500 udp 990 >

465 31.747 ether1 src-pub-ip:4500 dst-pub-ip:4500 udp 990 >

466 31.753 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 654 >

467 31.753 local dst-pub-ip:4500 192.168.1.8:4500 udp 654 >

468 31.753 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 654 >

469 32.197 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 302 >

470 32.197 local dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

471 32.197 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 302 >

472 32.197 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 1514 >

473 32.197 local dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

474 32.197 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >

This is an outgoing call capture, any ideas?

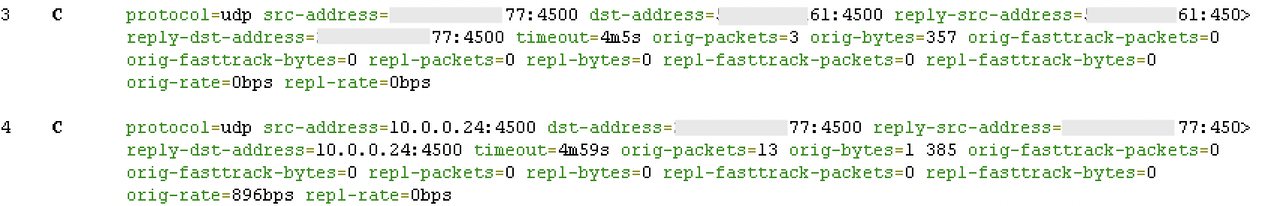

Re: Cannot dial out wifi-call from mobile phone [SOLVED]

Yes. The exchange sends a packet so large that it exceeds the L2 MTU of the AP or the phone, or the L3 MTU of the phone. The L3 MTU configured on bridge local is too optimistic, so the router forwards that packet to the phone without fragmenting it.
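To spot such packets in a long capture like the one above, a small script can help. This is only a rough sketch: it assumes the columns are packet number, time, interface, source, destination, protocol and size, that the size column includes the 14-byte Ethernet header, and that the phone and AP use a 1500-byte L3 MTU. None of that is confirmed in this thread, and the names `parse_line` and `oversized` are made up for the illustration.

```python
from typing import List, NamedTuple, Optional

ETH_HEADER = 14        # assumption: the size column counts the Ethernet header
ASSUMED_L3_MTU = 1500  # assumption: L3 MTU of the phone / AP


class Packet(NamedTuple):
    num: int
    time: float
    iface: str
    src: str   # assumption: first address column is the source
    dst: str
    proto: str
    size: int


def parse_line(line: str) -> Optional[Packet]:
    """Parse one sniffer line; return None for blank or unrelated lines."""
    parts = line.split()
    if len(parts) < 7 or not parts[0].isdigit():
        return None
    return Packet(int(parts[0]), float(parts[1]), parts[2],
                  parts[3], parts[4], parts[5], int(parts[6]))


def oversized(lines: List[str], l3_mtu: int = ASSUMED_L3_MTU) -> List[Packet]:
    """Return packets whose frame size exceeds l3_mtu plus the Ethernet header."""
    limit = l3_mtu + ETH_HEADER
    packets = (parse_line(line) for line in lines)
    return [p for p in packets if p is not None and p.size > limit]


# A few lines copied from the capture above.
capture = """\
448 24.191 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 302 >
451 24.191 ether1 dst-pub-ip:4500 src-pub-ip:4500 udp 1514 >
452 24.191 local dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >
453 24.191 ether10 dst-pub-ip:4500 192.168.1.8:4500 udp 1582 >
"""

for p in oversized(capture.splitlines()):
    print(f"#{p.num} on {p.iface}: {p.size} bytes, sent unfragmented")
```

With these sample lines, only the 1582-byte frames on local and ether10 are flagged; the 1514-byte frame arriving on ether1 sits exactly at the assumed limit.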