Hi Amm0 and Tangent. Once again, excellent feedback.

How would I go about running the cmd or shell options?

I see the cmd option in the container specification, but I'm not sure what to put in there.

I am stuck.

Below is the latest update on the setup and its status. With this configuration the container starts and stays running, but I am still unable to ping it or access it. I think it is effectively “working” and just unable to bring up its network side…

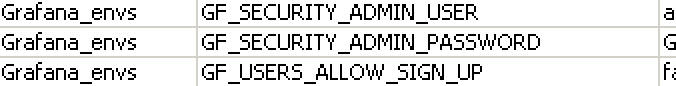

container/envs/print

3 name=“librenms_envs” key=“TZ” value=“Africa/Johannesburg”

4 name=“librenms_envs” key=“MYSQL_ALLOW_EMPTY_PASSWORD” value=“yes”

5 name=“librenms_envs” key=“DB_NAME” value=“librenmsdb”

6 name=“librenms_envs” key=“DB_PASSWORD” value=“xxxxx”

8 name=“mariadb_envs” key=“MARIADB_USER” value=“librenms”

9 name=“mariadb_envs” key=“MARIADB_PASSWORD” value=“xxxxx”

10 name=“mariadb_envs” key=“MARIADB_DATABASE” value=“librenmsdb”

11 name=“mariadb_envs” key=“MARIADB_ROOT_PASSWORD” value=“xxxxx”

12 name=“librenms_envs” key=“DB_HOST” value=“172.xxx.1.7”

13 name=“librenms_envs” key=“DB_USER” value=“librenms”

14 name=“librenms_envs” key=“DB_PORT” value=“3306”

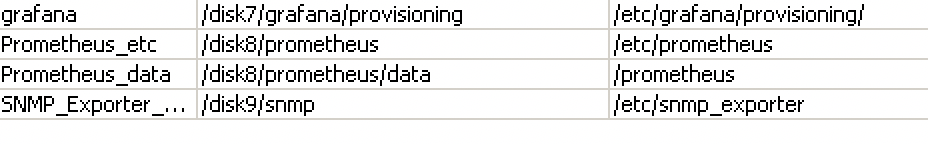

container/mounts/print

4 name=“mariadb_data” src=“/disk5/mariadb/data” dst=“/var/lib/mariadb”

5 name=“mariadb_dump” src=“/disk5/mariadb/dump” dst=“/docker-entrypoint-initdb.d”

6 name=“librenms” src=“/disk4/opt/librenms/data” dst=“/data”

7 name=“opt_librenms” src=“/disk4/opt” dst=“/opt”

container/print

2 name=“c5c14144-2312-445f-86ef-10e74f6a5aa3” tag=“library/mariadb:latest” os=“linux” arch=“arm64” interface=veth5-mariadb envlist=“mariadb_envs” root-dir=/disk5 mounts=mariadb_data,mariadb_dump dns=“” hostname=“MariaDB” logging=yes status=running

3 name=“12c5b838-4813-4194-9ed2-ae6c7b444e06” tag=“librenms/librenms:latest” os=“linux” arch=“arm64” interface=veth4-librenms envlist=“librenms_envs” root-dir=/disk4 mounts=librenms,opt_librenms dns=“” workdir=“/opt/librenms” status=running

The log from the completed import is below. It seems to go through all the steps, apart from a chown error (the two “chown: changing ownership of ‘/proc/self/fd/…’” lines).

However, it carries on until it tries to contact the MariaDB server, which it obviously cannot do if the network is not working…
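One way to narrow this down might be a plain TCP connect test to the MariaDB port from another machine on the same network. If it fails, the problem is likely routing or bridging rather than LibreNMS itself. Below is a minimal sketch, assuming Python 3 is available on a LAN host. `port_open` is just a hypothetical helper name, and the address is left as the redacted DB_HOST value.

```python
import socket
import sys


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP handshake with host:port completes within `timeout` seconds."""
    try:
        # create_connection resolves the host and performs a full TCP connect
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unreachable or unresolvable hosts
        return False


if __name__ == "__main__":
    # Usage: python3 port_check.py <db-host> [port]
    host = sys.argv[1] if len(sys.argv) > 1 else "127.0.0.1"
    port = int(sys.argv[2]) if len(sys.argv) > 2 else 3306  # DB_PORT from librenms_envs
    print(f"{host}:{port} reachable: {port_open(host, port)}")
```

For example, `python3 port_check.py 172.xxx.1.7 3306` (with the real address filled in). A `False` here, while MariaDB reports as running, would point at the veth/bridge setup rather than at the database.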

09:34:28 container,info,debug import successful, container b3e55268-3527-4573-81e3-6c20de4eb91d

09:34:41 container,info,debug [s6-init] making user provided files available at /var/run/s6/etc…exited 0.

09:34:41 container,info,debug [s6-init] ensuring user provided files have correct perms…exited 0.

09:34:41 container,info,debug [fix-attrs.d] applying ownership & permissions fixes…

09:34:41 container,info,debug [fix-attrs.d] done.

09:34:41 container,info,debug [cont-init.d] executing container initialization scripts…

09:34:41 container,info,debug [cont-init.d] 00-fix-logs.sh: executing…

09:34:41 container,info,debug chown: changing ownership of ‘/proc/self/fd/1’: Operation not permitted

09:34:41 container,info,debug chown: changing ownership of ‘/proc/self/fd/2’: Operation not permitted

09:34:41 container,info,debug [cont-init.d] 00-fix-logs.sh: exited 0.

09:34:41 container,info,debug [cont-init.d] 01-fix-uidgid.sh: executing…

09:34:42 container,info,debug [cont-init.d] 01-fix-uidgid.sh: exited 0.

09:34:42 container,info,debug [cont-init.d] 02-fix-perms.sh: executing…

09:34:42 container,info,debug Fixing perms…

09:34:42 container,info,debug [cont-init.d] 02-fix-perms.sh: exited 0.

09:34:42 container,info,debug [cont-init.d] 03-config.sh: executing…

09:34:42 container,info,debug Setting timezone to Africa/Johannesburg…

09:34:42 container,info,debug Setting PHP-FPM configuration…

09:34:42 container,info,debug Setting PHP INI configuration…

09:34:42 container,info,debug Setting OpCache configuration…

09:34:42 container,info,debug Setting Nginx configuration…

09:34:42 container,info,debug Updating SNMP community…

09:34:42 container,info,debug Initializing LibreNMS files / folders…

09:34:42 container,info,debug Setting LibreNMS configuration…

09:34:42 container,info,debug Checking LibreNMS plugins…

09:34:42 container,info,debug Fixing perms…

09:34:42 container,info,debug Checking additional Monitoring plugins…

09:34:42 container,info,debug Checking alert templates…

09:34:42 container,info,debug [cont-init.d] 03-config.sh: exited 0.

09:34:42 container,info,debug [cont-init.d] 04-svc-main.sh: executing…

09:34:42 container,info,debug Generating APP_KEY and unique NODE_ID

09:35:34 container,info,debug Waiting 60s for database to be ready…

09:38:41 container,info,debug ERROR: Failed to connect to database on 172.xxx.1.7

09:38:41 container,info,debug [cont-init.d] 04-svc-main.sh: exited 1.

09:38:41 container,info,debug [cont-finish.d] executing container finish scripts…

09:38:41 container,info,debug [cont-finish.d] done.

09:38:41 container,info,debug [s6-finish] waiting for services.

09:38:42 container,info,debug [s6-finish] sending all processes the TERM signal.

09:38:45 container,info,debug [s6-finish] sending all processes the KILL signal and exiting.

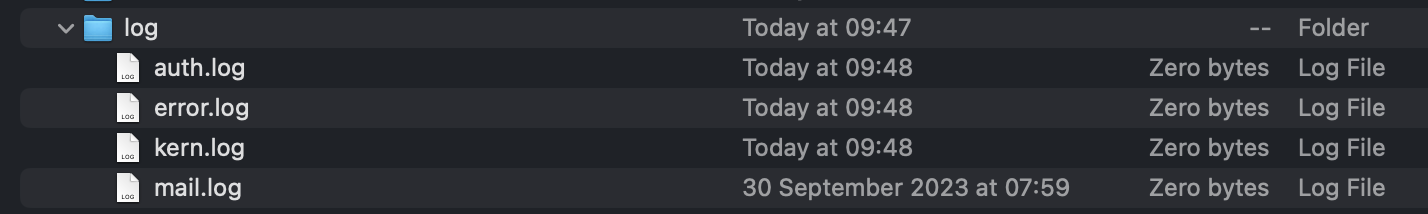

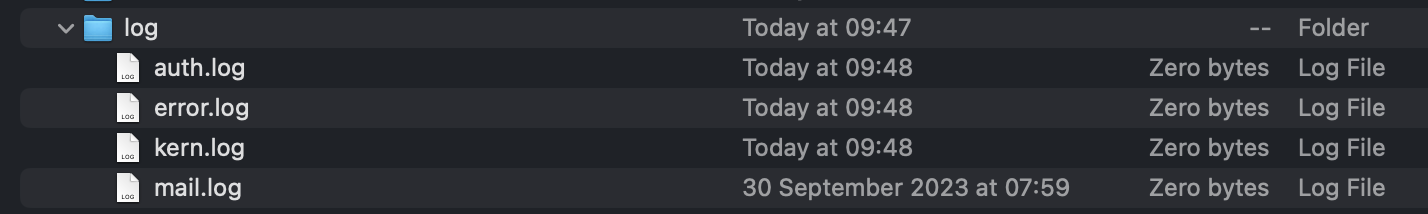

Below are also the contents of the /var/log folder. There are files, but they are all 0 bytes…